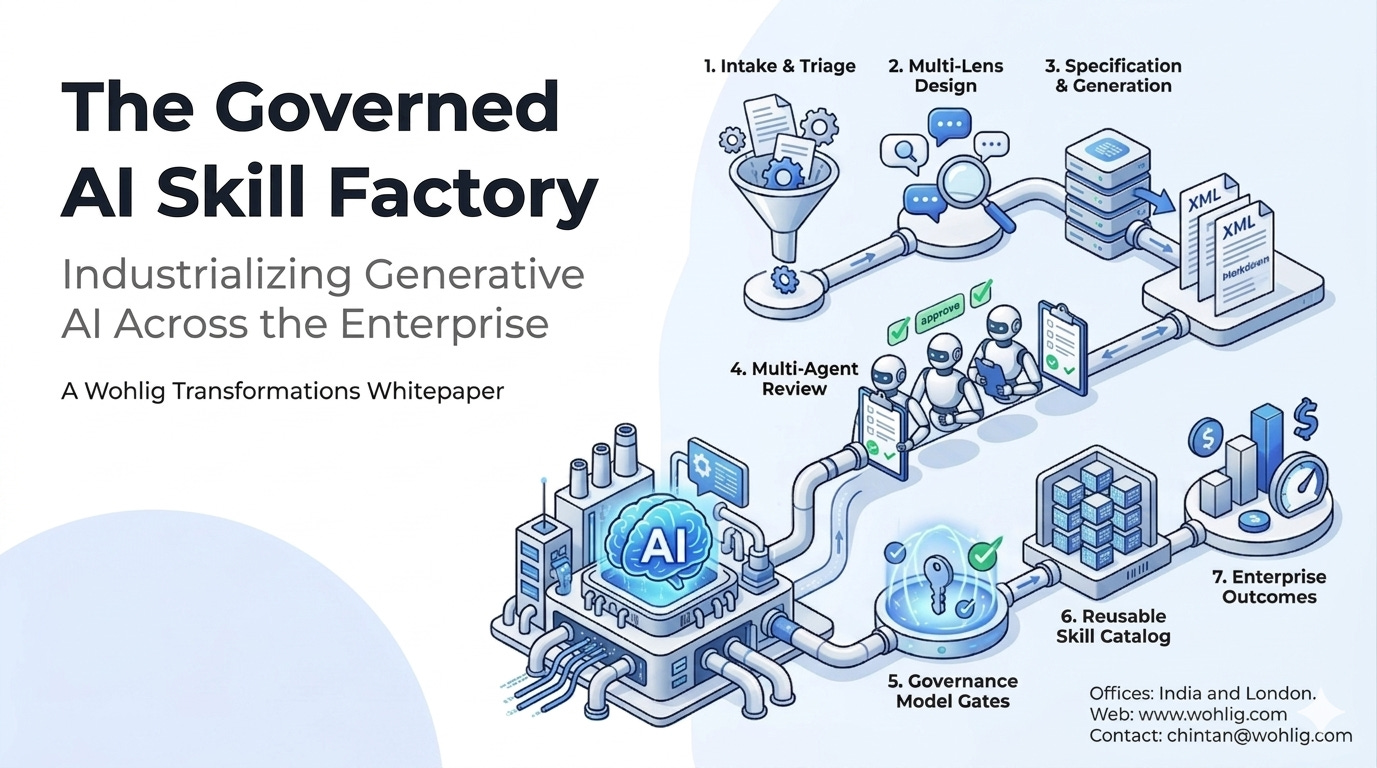

The Governed AI Skill Factory

Industrializing Generative AI Across the Enterprise

A Wohlig Transformations Whitepaper

Executive Summary

Enterprises are now past the question of whether to adopt generative AI. The new question is how to scale it safely. Most organizations get the first two or three AI use cases into production, then stall — held back by prompt sprawl, missing review processes, no versioning, and a growing risk surface that the CISO and CFO refuse to absorb.

Wohlig’s Governed AI Skill Factory is a platform pattern and engineering practice that turns the production of AI capabilities (we call them skills) into a repeatable, audited, multi-phase pipeline. It allows non-developers to specify new AI workflows safely, mass-produces those workflows with consistent quality, and gives the organization the audit, governance, and reuse it needs to operate AI at scale.

This paper describes the architecture, the engineering pipeline, the governance model, and the business outcomes Wohlig customers can expect.

1. The Problem: Why Enterprise AI Stalls After the First Wins

Wohlig has consistently observed five failure modes in enterprises that have crossed the AI proof-of-concept line:

Prompt sprawl. Dozens or hundreds of prompts live in scripts, notebooks, and Slack messages with no inventory or owner.

No review. Prompts go from a developer’s laptop to production with no formal quality gate. Hallucinations, prompt injections, and policy violations reach customers.

No versioning. A prompt that worked last quarter is silently degraded by a model upgrade. There is no way to roll back.

No reuse. Each team rebuilds the same RAG pattern, the same parser, the same summarizer — three to five times over.

No audit trail. When regulators or internal audit ask “what did this AI do, and on whose authority?”, the answer is a shrug.

The cost is real: failed pilots, blocked rollouts, abandoned investments, and growing executive scepticism after the initial enthusiasm.

2. The Solution Pattern: A Skill Factory

Wohlig’s Skill Factory is built on four principles:

Specifications, not prompts. Every AI capability is described in a structured spec (inputs, outputs, tools, guardrails, examples) before any code is written.

Pipelines, not heroics. Skills are produced through a deterministic multi-phase pipeline that any team can run.

Review by default. No skill ships without passing automated and multi-agent reviews.

Versioning end-to-end. Skills, prompts, evaluations, and rollouts are tracked the same way code and infrastructure are.
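As an illustration of the first principle, a spec could be modelled as a small typed record. The sketch below is hypothetical: the class name `SkillSpec`, the `name` and `version` fields, and the `missing_fields` check are assumptions made for illustration and do not describe Wohlig's actual spec format. Only the five sections (inputs, outputs, tools, guardrails, examples) come from the text above.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SkillSpec:
    """Hypothetical structured spec; the five sections mirror the list above."""
    name: str
    version: str
    inputs: dict[str, str] = field(default_factory=dict)    # field name -> description
    outputs: dict[str, str] = field(default_factory=dict)   # field name -> description
    tools: tuple[str, ...] = ()
    guardrails: tuple[str, ...] = ()
    examples: tuple[tuple[str, str], ...] = ()               # (input, expected output)

    def missing_fields(self) -> list[str]:
        """Return the required sections that are still empty."""
        required = ("inputs", "outputs", "guardrails", "examples")
        return [name for name in required if not getattr(self, name)]


# A draft that has inputs and outputs but no guardrails or examples yet.
draft = SkillSpec(
    name="invoice-summarizer",
    version="0.1.0",
    inputs={"invoice_text": "str"},
    outputs={"summary": "str"},
)
print(draft.missing_fields())  # ['guardrails', 'examples']
```

Treating `tools` as optional is a design choice in this sketch, since a skill may legitimately call no tools.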

3. The Four-Phase Pipeline

Phase 1 — Intake and Triage

The factory accepts a natural-language request from any authorized user. A triage layer:

Classifies intent (new skill, extension, replacement).

Checks the existing skill catalog for overlap.

Captures required inputs, outputs, data scopes, and risk class.

This eliminates the “another team already built this” duplication problem on day one.
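The catalog overlap check can be sketched with a simple token-set Jaccard similarity. This is an assumption for illustration only: the text does not say how overlap is detected, and a production triage layer would likely use something stronger, such as embedding search. The catalog entries, the 0.5 threshold, and all function names are hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens; a deliberately simple normalizer."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the intersection divided by the size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def find_overlaps(
    request: str, catalog: dict[str, str], threshold: float = 0.5
) -> list[tuple[str, float]]:
    """Return (skill name, score) for entries at or above the threshold, best first."""
    req = tokens(request)
    scored = [(name, jaccard(req, tokens(desc))) for name, desc in catalog.items()]
    hits = [(name, score) for name, score in scored if score >= threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Hypothetical catalog entries, for illustration only.
CATALOG = {
    "contract-summarizer": "summarize legal contracts for the procurement team",
    "ticket-router": "route support tickets to the right queue",
}

overlaps = find_overlaps("summarize legal contracts for procurement", CATALOG)
print([name for name, _ in overlaps])  # ['contract-summarizer']
```

A hit would steer the request toward "extension" or "replacement" rather than "new skill".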

Phase 2 — Multi-Lens Design

Every candidate skill is run through a structured design review using eleven reasoning lenses, including:

First principles — what is the actual underlying need?

Inversion — what would make this skill obviously bad?

Pre-mortem — assume this fails in production. Why did it fail?

Systems thinking — what upstream/downstream systems are affected?

Adversarial — how would a malicious user abuse this?

The output is a hardened design document, not a prompt.
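One way to make the lens review checkable is to treat each lens as a prompt question and refuse to call a design hardened until every lens has at least one recorded finding. The sketch below is hypothetical and models only the five lenses named above; the remaining six are not listed in this paper and are therefore left out. The names `Lens` and `unanswered_lenses` are illustrative.

```python
from enum import Enum


class Lens(Enum):
    # Only the five lenses named in the text; the other six are not specified.
    FIRST_PRINCIPLES = "What is the actual underlying need?"
    INVERSION = "What would make this skill obviously bad?"
    PRE_MORTEM = "Assume this fails in production. Why did it fail?"
    SYSTEMS_THINKING = "What upstream/downstream systems are affected?"
    ADVERSARIAL = "How would a malicious user abuse this?"


def unanswered_lenses(findings: dict[Lens, list[str]]) -> list[Lens]:
    """Lenses with no recorded finding, in declaration order."""
    return [lens for lens in Lens if not findings.get(lens)]


# Hypothetical partial review: one lens answered, one opened but left empty.
findings = {
    Lens.FIRST_PRINCIPLES: ["Analysts need a two-line summary, not a full report."],
    Lens.ADVERSARIAL: [],
}
pending = unanswered_lenses(findings)
print([lens.name for lens in pending])
# ['INVERSION', 'PRE_MORTEM', 'SYSTEMS_THINKING', 'ADVERSARIAL']
```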

Phase 3 — Specification and Generation

The hardened design becomes a formal specification (machine-readable, e.g. XML or structured Markdown with frontmatter). The skill artifact — instructions, references, scripts, examples — is generated from the spec. Because generation is deterministic and spec-driven, a single source of truth governs both behavior and documentation.
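A minimal sketch of spec-driven generation, assuming the spec has already been parsed into a dictionary: the instructions are rendered by a pure function, and a fingerprint of the canonical spec is embedded so the artifact can be traced back to the exact spec that produced it. The spec contents, field names, and function names are hypothetical, and the sketch covers only templated rendering, not any model-generated parts of a skill.

```python
import hashlib
import json

# Hypothetical spec, written as a plain dict for brevity.
SPEC = {
    "name": "invoice-summarizer",
    "version": "1.2.0",
    "inputs": {"invoice_text": "Raw text of one invoice"},
    "outputs": {"summary": "Two-sentence summary"},
    "guardrails": ["Never output bank account numbers"],
}


def spec_fingerprint(spec: dict) -> str:
    """Short hash of the canonical JSON form; identical specs share a fingerprint."""
    canonical = json.dumps(spec, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def render_instructions(spec: dict) -> str:
    """Pure template rendering: the same spec always yields the same text."""
    lines = [f"# {spec['name']} (v{spec['version']})", "", "Inputs:"]
    lines += [f"- {key}: {desc}" for key, desc in sorted(spec["inputs"].items())]
    lines += ["", "Outputs:"]
    lines += [f"- {key}: {desc}" for key, desc in sorted(spec["outputs"].items())]
    lines += ["", "Guardrails:"]
    lines += [f"- {rule}" for rule in spec["guardrails"]]
    lines += ["", f"Spec fingerprint: {spec_fingerprint(spec)}"]
    return "\n".join(lines)


artifact = render_instructions(SPEC)
print(artifact)
```

Because the rendered text doubles as documentation, any change to the spec changes both the behavior-defining instructions and the fingerprint in one step.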

Phase 4 — Multi-Agent Review

Specialized review agents check the generated skill in parallel:

Design reviewer — does the skill match the spec?

Usability reviewer — will the target user actually succeed?

Evolution reviewer — is this maintainable?

Script reviewer — are any tools or scripts safe?

A skill ships only when all reviewers approve. Failures are routed back to the appropriate phase with structured feedback.
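The all-must-approve rule can be sketched with a thread pool that runs reviewers side by side. In this hypothetical example the reviewers are simple rule-based stand-ins rather than real review agents, only two of the four reviewers are modelled, and the choice to route both failures back to Phase 3 is an assumption. The `Verdict` type and the skill dictionary shape are illustrative.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    reviewer: str
    approved: bool
    return_to_phase: Optional[int] = None  # phase that should rework the skill
    feedback: str = ""


def design_reviewer(skill: dict) -> Verdict:
    """Stand-in check: every output named in the spec is produced by the artifact."""
    missing = sorted(set(skill["spec_outputs"]) - set(skill["artifact_outputs"]))
    if missing:
        return Verdict("design", False, 3, f"Artifact is missing outputs: {missing}")
    return Verdict("design", True)


def script_reviewer(skill: dict) -> Verdict:
    """Stand-in check: reject scripts that pipe downloaded content into a shell."""
    risky = [s for s in skill["scripts"] if "| sh" in s]
    if risky:
        return Verdict("script", False, 3, f"Unsafe scripts: {risky}")
    return Verdict("script", True)


REVIEWERS: list[Callable[[dict], Verdict]] = [design_reviewer, script_reviewer]


def review(skill: dict, reviewers=REVIEWERS) -> tuple[bool, list[Verdict]]:
    """Run all reviewers concurrently; the skill ships only if every one approves."""
    with ThreadPoolExecutor(max_workers=len(reviewers)) as pool:
        verdicts = list(pool.map(lambda reviewer: reviewer(skill), reviewers))
    failures = [v for v in verdicts if not v.approved]
    return (not failures, failures)


# Hypothetical generated skill with one unsafe script.
skill = {
    "spec_outputs": ["summary"],
    "artifact_outputs": ["summary"],
    "scripts": ["curl https://example.com/install | sh"],
}
ships, failures = review(skill)
print(ships, [(f.reviewer, f.return_to_phase) for f in failures])
# False [('script', 3)]
```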

4. Governance Model

The factory enforces governance the same way modern software platforms enforce CI/CD: through pipeline gates that cannot be bypassed.

Spec validation — Every skill must have a complete, parsable spec.

Policy review — PII handling, data egress, prompt-injection defenses.

Eval threshold — Skill must beat a quality bar on a held-out test set.

Cost ceiling — Per-call and per-month spend caps.

Multi-agent sign-off — All review agents must approve.

Human approval (high-risk) — Compliance officer countersign for risk-class 3+.

Versioned rollout — Canary, shadow, or blue-green deployment.

This is the governance layer that allows AI to enter regulated environments — BFSI, healthcare, public sector — without slowing innovation.
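A rough sketch of how such gates might be chained: each gate is a named predicate, and the pipeline stops at the first one that fails. Only four of the gates listed above are modelled. The thresholds (`EVAL_BAR`, `COST_CEILING_PER_CALL`), the `Candidate` fields, and the function names are hypothetical; in practice the governance committee would set the actual values.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Candidate:
    spec_complete: bool
    eval_score: float          # score on a held-out test set, 0..1
    est_cost_per_call: float   # estimated spend per call
    risk_class: int
    human_approver: Optional[str] = None


# Hypothetical thresholds, for illustration only.
EVAL_BAR = 0.85
COST_CEILING_PER_CALL = 0.05

GATES: list[tuple[str, Callable[[Candidate], bool]]] = [
    ("spec validation", lambda c: c.spec_complete),
    ("eval threshold", lambda c: c.eval_score >= EVAL_BAR),
    ("cost ceiling", lambda c: c.est_cost_per_call <= COST_CEILING_PER_CALL),
    # Risk class 3 and above needs a named human approver.
    ("human approval", lambda c: c.risk_class < 3 or c.human_approver is not None),
]


def first_failed_gate(candidate: Candidate) -> Optional[str]:
    """Return the name of the first gate that blocks release, or None if all pass."""
    for name, check in GATES:
        if not check(candidate):
            return name
    return None


high_risk = Candidate(spec_complete=True, eval_score=0.9,
                      est_cost_per_call=0.02, risk_class=3)
print(first_failed_gate(high_risk))  # human approval
```

Keeping the gates in one ordered list means there is a single place to audit what stands between a skill and production.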

5. Reference Architecture

    [ Intake UI ] ──▶ [ Triage Service ] ──▶ [ Skill Catalog Lookup ]
            │
            ▼
    [ Multi-Lens Design Engine ]
            │
            ▼
    [ Spec Repository (Git) ]
            │
            ▼
    [ Generation Service ] ──▶ [ Skill Artifact ]
            │
            ▼
    [ Review Agent Pool (parallel) ]
            │
            ▼
    [ Evaluation Harness ]
            │
            ▼
    [ Release Service ]
            ├─ canary / shadow / prod
            └─ telemetry + cost guardrails

Wohlig deploys this on the customer’s chosen cloud (GCP, AWS) inside their own VPC. Identity flows through the customer’s existing SSO. Secrets sit in the customer’s secret manager. Telemetry flows into the customer’s existing observability stack.
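Two behaviors of the Release Service in the diagram, canary routing and cost guardrails, can be sketched in a few lines. Both pieces are assumptions for illustration: the paper does not describe how canary cohorts are chosen or how budgets are enforced, and the names `in_canary` and `MonthlyBudget` are hypothetical.

```python
import hashlib


def in_canary(user_id: str, percent: int) -> bool:
    """Deterministically place a user in the canary cohort using a stable hash."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < percent


class MonthlyBudget:
    """Refuses a call once it would push spend past the monthly cap."""

    def __init__(self, cap: float) -> None:
        self.cap = cap
        self.spent = 0.0

    def try_spend(self, cost: float) -> bool:
        if self.spent + cost > self.cap:
            return False  # caller should reject or defer the request
        self.spent += cost
        return True


budget = MonthlyBudget(cap=1.0)
results = [budget.try_spend(0.5) for _ in range(3)]
print(results)  # [True, True, False]
```

Hashing the user ID rather than sampling at random keeps each user on the same version for the whole rollout, which makes canary telemetry easier to compare.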

6. Outcomes

In Wohlig engagements, customers consistently see:

70–90% reduction in the time to ship a new AI workflow once the factory is operational.

Order-of-magnitude reduction in unreviewed prompts in production.

Documented audit trail for every AI capability — passes internal audit and regulator inquiry.

Reusable skill catalog that compounds in value across departments.

CFO-friendly cost discipline — every skill has a per-call ceiling and a monthly budget.

7. Engagement Model

Wohlig delivers the Skill Factory in three phases.

Phase A — Foundation (4–6 weeks). Stand up the factory infrastructure, spec format, and first three pilot skills. Train the customer’s AI CoE.

Phase B — Expansion (8–12 weeks). Migrate existing prompt assets into the factory. Onboard 3–5 departments. Establish the governance committee.

Phase C — Operate (ongoing). Wohlig stays involved at the level the customer wants — fully managed, hybrid, or pure advisory. Customers retain full ownership of skills, specs, and infrastructure.

8. About Wohlig

Wohlig Transformations is a digital transformation, cloud, and AI consulting firm founded in 2016. We have shipped 10+ generative-AI solutions in production, completed 20+ cloud migrations, and hold 40+ Google Cloud certifications including a Data Analytics specialization. We serve governments (Maharashtra, Gujarat, ONDC), enterprises (Lodha, Eros Now, Hungama), and high-growth consumer companies (Swiggy, Ninjacart, PW Live).

Offices: India and London. Web: www.wohlig.com

To discuss your AI scaling roadmap, reach Wohlig at chintan@wohlig.com.