Your AI Agents Get Dumber Every Month. Here's How to Make Them Compound Instead.

Wohlig Transformations · AI Engineering

There is a quiet failure mode in enterprise AI that nobody puts on

the slide deck. You build an agent. It works. It saves time. The

team is delighted. You build five more. Three months later, half of

them are silently broken — an upstream API changed a field, a tool

schema drifted, an edge case nobody anticipated has crept into

production. The team is back to doing the work manually. Token

spend is up. Confidence in AI is down. The CFO is asking pointed

questions.

This is the agent decay problem, and it is the single biggest

reason enterprise AI initiatives lose momentum after the first wave

of wins.

The second problem, and often the more expensive one, is token-bill creep.

Every agent invocation re-derives the same reasoning the agent did

yesterday. The model thinks through the tax filing logic from

scratch. Then thinks through the compliance check from scratch. Then

thinks through the contract clause from scratch. Multiply by ten

thousand invocations a month, and you are paying for the same

thinking, repeatedly, in perpetuity.

Wohlig builds the fix. We call it a Self-Improving Agent

Platform.

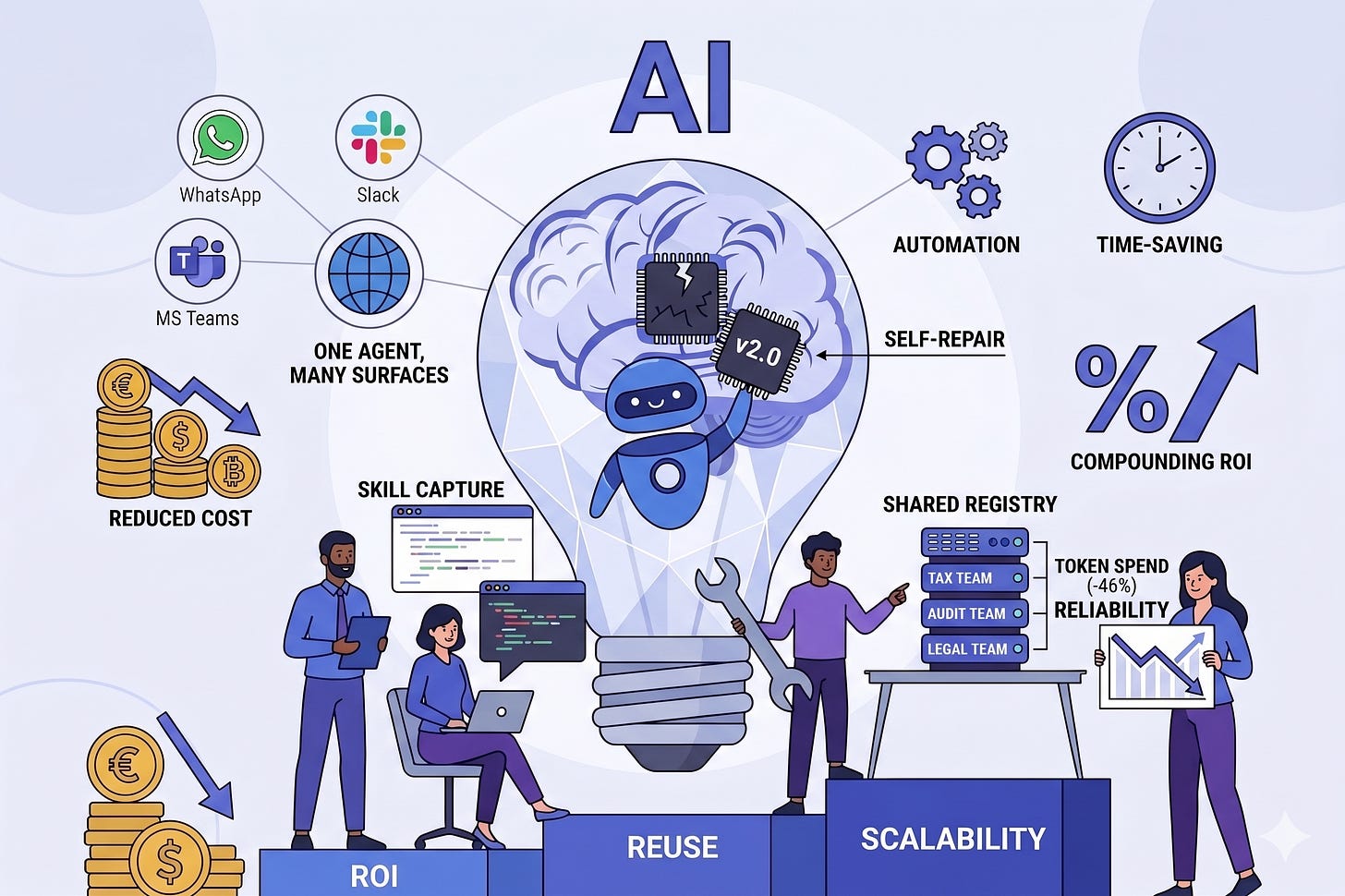

The pattern

Three properties make agents compound rather than decay:

1. Skill capture. When an agent successfully completes a task,
the workflow is captured as a named, versioned, reusable skill.
The next time a similar task arrives, the agent does not re-reason
from scratch; it retrieves the skill and runs it. This alone can cut
token spend by 30–50%, and the savings compound as the skill
library grows.

2. Self-repair. Skills are monitored continuously. When a skill

fails — because a tool changed, a schema drifted, or an edge case

appeared — the platform diagnoses the failure and patches the skill

automatically. No engineer chasing the regression. No quiet decay.

The agent is back online while the team is still at lunch.

3. Shared registry. Skills are stored in a governed registry

that every agent in the organization can read from. When the tax

team’s agent learns to handle a new GST schema, the audit team’s

agent and the legal team’s agent benefit immediately. One team’s

work compounds into capability for everyone else.

The result is what AI was always supposed to deliver: capability

that grows with use, instead of decaying.
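To make the three properties concrete, here is a minimal Python sketch, not the platform's actual implementation. Every name in it (`Skill`, `SkillRegistry`, `run_with_repair`, `rename_field_repair`) is hypothetical, the registry is a plain in-memory dictionary, and the "repair" is a hard-coded patch standing in for whatever automated diagnosis a real platform would run.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

Workflow = Callable[[dict], dict]


@dataclass
class Skill:
    name: str
    version: int
    run: Workflow                                        # the captured workflow
    outcomes: List[bool] = field(default_factory=list)   # True = run succeeded


class SkillRegistry:
    """In-memory stand-in for the shared, governed registry."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[Skill]] = {}

    def capture(self, name: str, run: Workflow) -> Skill:
        """Store a workflow as the next version of a named skill."""
        versions = self._versions.setdefault(name, [])
        skill = Skill(name=name, version=len(versions) + 1, run=run)
        versions.append(skill)
        return skill

    def latest(self, name: str) -> Optional[Skill]:
        """Return the newest version of a skill, or None if unknown."""
        versions = self._versions.get(name)
        return versions[-1] if versions else None


def run_with_repair(registry: SkillRegistry, name: str, task: dict,
                    repair: Callable[[Skill, Exception], Workflow]) -> dict:
    """Run the latest skill; on failure, register a patched version and retry once."""
    skill = registry.latest(name)
    if skill is None:
        raise KeyError(f"no skill named {name!r}")
    try:
        result = skill.run(task)
        skill.outcomes.append(True)
        return result
    except Exception as exc:
        skill.outcomes.append(False)
        # Assumption: `repair` returns a fixed workflow. A real platform
        # would diagnose the failure and validate the patch before use.
        patched = registry.capture(name, repair(skill, exc))
        result = patched.run(task)
        patched.outcomes.append(True)
        return result


# Usage: version 1 expects a field that upstream has since renamed.
registry = SkillRegistry()
registry.capture("compute_gst",
                 lambda t: {"tax": t["amount"] * t["gst_rate_pct"] / 100})


def rename_field_repair(skill: Skill, exc: Exception) -> Workflow:
    # Hypothetical patch: read the renamed `rate_pct` field instead.
    return lambda t: {"tax": t["amount"] * t["rate_pct"] / 100}


result = run_with_repair(registry, "compute_gst",
                         {"amount": 1000, "rate_pct": 18}, rename_field_repair)
```

Because the patched workflow is stored as a new version rather than overwriting the old one, any other agent reading the same registry picks up the fix on its next call, and the failed run stays in the history of version 1.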

What changes for the business

Token spend collapses. Industry data on this pattern shows a
roughly 46% reduction in token usage. For an enterprise running
serious agent workloads, that is real money, and the savings grow
with adoption rather than shrink.

Reliability stops being a fire drill. Agents that used to

silently break and trigger emergency engineering investigations now

self-heal. The engineering team focuses on building new capability

rather than maintaining last year’s.

Knowledge stops being trapped. The clever agent the marketing

team built that nobody else knows about now lives in the registry,

discoverable, reusable, and auditable. Capability accumulates across

the organization.

ROI becomes measurable. Every skill carries execution history,
version lineage, and cost-per-run metrics. When the CFO asks “what
did we save with AI this quarter?”, the answer is a real number
with a permalink.
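As a rough illustration of how such a number could be derived from execution history, the sketch below aggregates per-run records into a cost-per-run figure and a token-savings estimate. The record shape (`RunRecord`), the function names, the token price, and the baseline of tokens an agent would spend reasoning from scratch are all assumptions made for the example, not measured values.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RunRecord:
    skill: str
    version: int
    tokens: int        # tokens consumed by this run
    succeeded: bool


def cost_per_run(records: List[RunRecord], skill: str,
                 usd_per_1k_tokens: float) -> float:
    """Average cost in USD across all recorded runs of one skill."""
    runs = [r for r in records if r.skill == skill]
    if not runs:
        return 0.0
    total_tokens = sum(r.tokens for r in runs)
    return total_tokens / 1000 * usd_per_1k_tokens / len(runs)


def tokens_saved(records: List[RunRecord], skill: str,
                 baseline_tokens_per_run: int) -> int:
    """Tokens saved versus an assumed from-scratch baseline, successful runs only."""
    return sum(baseline_tokens_per_run - r.tokens
               for r in records
               if r.skill == skill and r.succeeded)


# Hypothetical history for one skill; all figures are made up.
history = [
    RunRecord("compute_gst", 1, tokens=400, succeeded=True),
    RunRecord("compute_gst", 1, tokens=600, succeeded=True),
    RunRecord("compute_gst", 2, tokens=500, succeeded=True),
]

average_cost = cost_per_run(history, "compute_gst", usd_per_1k_tokens=0.01)
saved = tokens_saved(history, "compute_gst", baseline_tokens_per_run=2000)
```

The savings figure is only as credible as the baseline, so in practice the baseline would need to come from measured pre-skill runs rather than an estimate.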

One agent, many surfaces. A skill written once works whether

the agent is invoked from WhatsApp, Slack, Microsoft Teams, an

internal portal, a CLI, or a desktop integration. The platform

abstracts away the surface — your customers and employees use it

where they already are.
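One way to picture the surface abstraction is a thin adapter per channel that normalizes incoming messages into a common request before any skill runs. The sketch below assumes a simplified `{"user", "text"}` chat payload and a `key=value` command syntax. Both are invented for illustration, as are the names `SkillRequest`, `from_chat`, `from_cli`, and `handle`. Real Slack, Teams, and WhatsApp payloads differ and would each need their own adapter.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class SkillRequest:
    skill: str
    args: Dict[str, str]
    user: str


def parse_command(text: str) -> Tuple[str, Dict[str, str]]:
    """Split 'skill key=value ...' into a skill name and string arguments."""
    name, *pairs = text.split()
    return name, dict(pair.split("=", 1) for pair in pairs)


def from_chat(payload: dict) -> SkillRequest:
    # Assumed payload shape, not any real chat platform's schema.
    skill, args = parse_command(payload["text"])
    return SkillRequest(skill=skill, args=args, user=payload["user"])


def from_cli(argv: List[str], user: str) -> SkillRequest:
    skill, args = parse_command(" ".join(argv))
    return SkillRequest(skill=skill, args=args, user=user)


# The skill is written once and knows nothing about the surface.
SKILLS: Dict[str, Callable[[Dict[str, str]], dict]] = {
    "compute_gst": lambda a: {
        "tax": float(a["amount"]) * float(a["rate_pct"]) / 100
    },
}


def handle(request: SkillRequest) -> dict:
    """Dispatch a normalized request to the named skill."""
    return SKILLS[request.skill](request.args)


chat_result = handle(from_chat(
    {"user": "u-123", "text": "compute_gst amount=1000 rate_pct=18"}))
cli_result = handle(from_cli(
    ["compute_gst", "amount=1000", "rate_pct=18"], user="u-123"))
```

Both calls reach the same skill through the same `handle` function, so adding a new surface means writing one more adapter rather than touching any skill.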

Where this works

This is not for a team running their first AI pilot. This is for

organizations that have already shipped multiple AI workflows and

are now staring at:

A token bill that has climbed every month for six months.

A backlog of “agent X is broken again” tickets.

A growing realization that the same capability is being rebuilt

three times in three departments.

If that sounds familiar — particularly in BFSI, professional

services, BPO/KPO, or document-heavy enterprises — the

self-improving pattern is the next architectural step.

Where Wohlig fits

We do four things:

Build the platform. The skill engine, the registry, the
self-repair loop, the multi-surface gateway, the dashboard.
Deployed inside your cloud, governed by your identity, audited
by you.

Migrate your existing agents. Whatever you have built so far
(copilots, chatbots, automation scripts) gets wrapped as
skills, versioned, and brought into the registry.

Train your AI team. Engineers and domain experts get the
patterns and the playbooks for authoring durable skills.

Operate it with you. As much or as little as you want:
managed service, hybrid, or pure advisor.

The honest summary

The first wave of enterprise AI was about getting agents into

production. The second wave — the one that actually delivers ROI —

is about making those agents compound rather than decay. Skill

reuse cuts cost. Self-repair preserves reliability. A shared

registry turns one team’s wins into the whole organization’s

capability.

Wohlig builds this. If your AI program is at the stage where the

costs are obvious and the returns are getting harder to point to,

this is what comes next.

Wohlig Transformations builds AI, cloud, and data platforms for

governments, enterprises, and high-growth startups. 10+ generative-AI

solutions in production. Founded 2016. Offices in India and London.